Kubelet Service Kill

Introduction

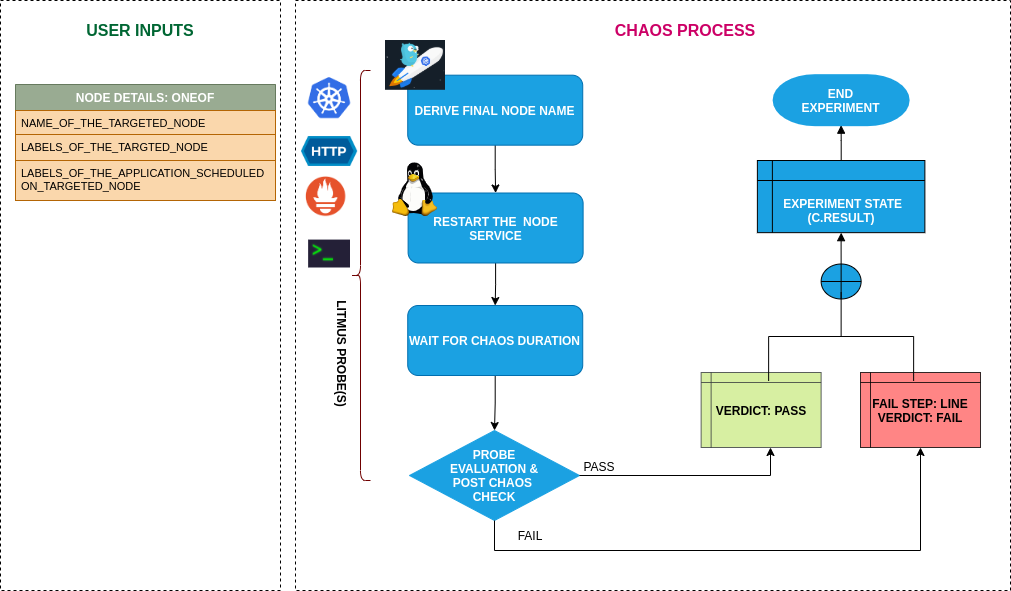

- This experiment causes the application to become unreachable because the node turns unschedulable (NotReady) when its kubelet service is killed.

- The kubelet service is stopped/killed on a node to make it unschedulable for a certain duration, i.e. TOTAL_CHAOS_DURATION. The application node should be healthy after the chaos injection, and the services should be accessible again.

- Here, the application implies services. The experiment can be reframed as: test application resiliency when a replica becomes unreachable because the kubelet service is down.

Scenario: Kill the kubelet service of the node

Uses

View the uses of the experiment

coming soon

Prerequisites

Verify the prerequisites

- Ensure that the Kubernetes version is greater than 1.16.

- Ensure that the Litmus Chaos Operator is running by executing `kubectl get pods` in the operator namespace (typically, `litmus`). If not, install from here.

- Ensure that the `kubelet-service-kill` experiment resource is available in the cluster by executing `kubectl get chaosexperiments` in the desired namespace. If not, install from here.

- Ensure that the node specified in the experiment ENV variable `TARGET_NODE` (the node whose kubelet service is to be killed) is cordoned before the chaos experiment is executed (that is, before applying the chaosengine manifest), so that the litmus experiment runner pods are not scheduled on it or subjected to eviction. This can be achieved with the following steps:

    - Get the node names against the application pods: `kubectl get pods -o wide`

    - Cordon the node: `kubectl cordon <nodename>`

Default Validations

View the default validations

The target nodes should be in ready state before and after chaos injection.

Minimal RBAC configuration example (optional)

NOTE

If you are using this experiment as part of a litmus workflow constructed, scheduled, and executed from chaos-center, then you may be making use of the litmus-admin RBAC, which is pre-installed in the cluster as part of the agent setup.

View the Minimal RBAC permissions

    ---
    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: kubelet-service-kill-sa
      namespace: default
      labels:
        name: kubelet-service-kill-sa
        app.kubernetes.io/part-of: litmus
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: kubelet-service-kill-sa
      labels:
        name: kubelet-service-kill-sa
        app.kubernetes.io/part-of: litmus
    rules:
      # Create and monitor the experiment & helper pods
      - apiGroups: [""]
        resources: ["pods"]
        verbs: ["create","delete","get","list","patch","update","deletecollection"]
      # Performs CRUD operations on the events inside chaosengine and chaosresult
      - apiGroups: [""]
        resources: ["events"]
        verbs: ["create","get","list","patch","update"]
      # Fetch configmaps details and mount it to the experiment pod (if specified)
      - apiGroups: [""]
        resources: ["configmaps"]
        verbs: ["get","list"]
      # Track and get the runner, experiment, and helper pods log
      - apiGroups: [""]
        resources: ["pods/log"]
        verbs: ["get","list","watch"]
      # for creating and managing the execution of commands inside the target container
      - apiGroups: [""]
        resources: ["pods/exec"]
        verbs: ["get","list","create"]
      # for configuring and monitoring the experiment job by the chaos-runner pod
      - apiGroups: ["batch"]
        resources: ["jobs"]
        verbs: ["create","list","get","delete","deletecollection"]
      # for creation, status polling and deletion of litmus chaos resources used within a chaos workflow
      - apiGroups: ["litmuschaos.io"]
        resources: ["chaosengines","chaosexperiments","chaosresults"]
        verbs: ["create","list","get","patch","update","delete"]
      # for experiment to perform node status checks
      - apiGroups: [""]
        resources: ["nodes"]
        verbs: ["get","list"]
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: kubelet-service-kill-sa
      labels:
        name: kubelet-service-kill-sa
        app.kubernetes.io/part-of: litmus
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: kubelet-service-kill-sa
    subjects:
      - kind: ServiceAccount
        name: kubelet-service-kill-sa
        namespace: default

Use this sample RBAC manifest to create a chaosServiceAccount in the desired (app) namespace. This example consists of the minimum necessary role permissions to execute the experiment.

Experiment tunables

Check the experiment tunables

Mandatory Fields

| Variables | Description | Notes |
|---|---|---|
| TARGET_NODE | Name of the target node | |
| NODE_LABEL | Node label used to filter the target node if the TARGET_NODE ENV is not set | Mutually exclusive with the TARGET_NODE ENV. If both are provided, TARGET_NODE is used |

Optional Fields

| Variables | Description | Notes |
|---|---|---|
| TOTAL_CHAOS_DURATION | The time duration for chaos insertion (seconds) | Defaults to 60s |
| LIB | The chaos lib used to inject the chaos | Defaults to litmus |
| LIB_IMAGE | The lib image used to inject kubelet kill chaos. The image should have systemd installed in it | Defaults to ubuntu:16.04 |
| RAMP_TIME | Period to wait before injection of chaos (seconds) | |
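
As a rough illustration of how the optional tunables fit together with the mandatory one, the sketch below sets RAMP_TIME and LIB_IMAGE alongside TARGET_NODE. The engine name, service account, and node name are the same placeholders used in the example further down this page. The RAMP_TIME value of '30' is an arbitrary assumption, and LIB_IMAGE simply spells out the documented default.

```yaml
# Sketch only: kubelet-service-kill with optional tunables set explicitly
apiVersion: litmuschaos.io/v1alpha1
kind: ChaosEngine
metadata:
  name: engine-nginx
spec:
  engineState: "active"
  annotationCheck: "false"
  chaosServiceAccount: kubelet-service-kill-sa
  experiments:
  - name: kubelet-service-kill
    spec:
      components:
        env:
        # name of the target node (placeholder)
        - name: TARGET_NODE
          value: 'node01'
        # chaos duration in seconds (documented default)
        - name: TOTAL_CHAOS_DURATION
          value: '60'
        # wait before injecting chaos, in seconds (assumed value)
        - name: RAMP_TIME
          value: '30'
        # helper image; it must have systemd installed (documented default)
        - name: LIB_IMAGE
          value: 'ubuntu:16.04'
```

If a custom LIB_IMAGE is substituted, it should also ship systemd, since the tunables table states that requirement for the helper image.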

Experiment Examples

Common and Node specific tunables

Refer to the common attributes and node-specific tunables to tune the tunables common to all experiments and those specific to node experiments.

Kill Kubelet Service

The TARGET_NODE ENV contains the name of the target node subjected to the chaos.

Use the following example to tune this:

    # kill the kubelet service of the target node
    apiVersion: litmuschaos.io/v1alpha1
    kind: ChaosEngine
    metadata:
      name: engine-nginx
    spec:
      engineState: "active"
      annotationCheck: "false"
      chaosServiceAccount: kubelet-service-kill-sa
      experiments:
      - name: kubelet-service-kill
        spec:
          components:
            env:
            # name of the target node
            - name: TARGET_NODE
              value: 'node01'
            - name: TOTAL_CHAOS_DURATION
              value: '60'
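
When TARGET_NODE is not set, the tunables table says the target node can be filtered through NODE_LABEL instead. The following is a minimal sketch of that variant. The label `kubernetes.io/hostname=node01` and its `key=value` form are assumptions for illustration, so substitute a label that actually exists on the node you intend to target.

```yaml
# Sketch only: select the target node by label instead of by name
apiVersion: litmuschaos.io/v1alpha1
kind: ChaosEngine
metadata:
  name: engine-nginx
spec:
  engineState: "active"
  annotationCheck: "false"
  chaosServiceAccount: kubelet-service-kill-sa
  experiments:
  - name: kubelet-service-kill
    spec:
      components:
        env:
        # label used to filter the target node (assumed key=value format)
        - name: NODE_LABEL
          value: 'kubernetes.io/hostname=node01'
        - name: TOTAL_CHAOS_DURATION
          value: '60'
```

TARGET_NODE is deliberately left out here: the two variables are mutually exclusive, and TARGET_NODE takes precedence if both are provided.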