Pod Autoscaler

Introduction

-

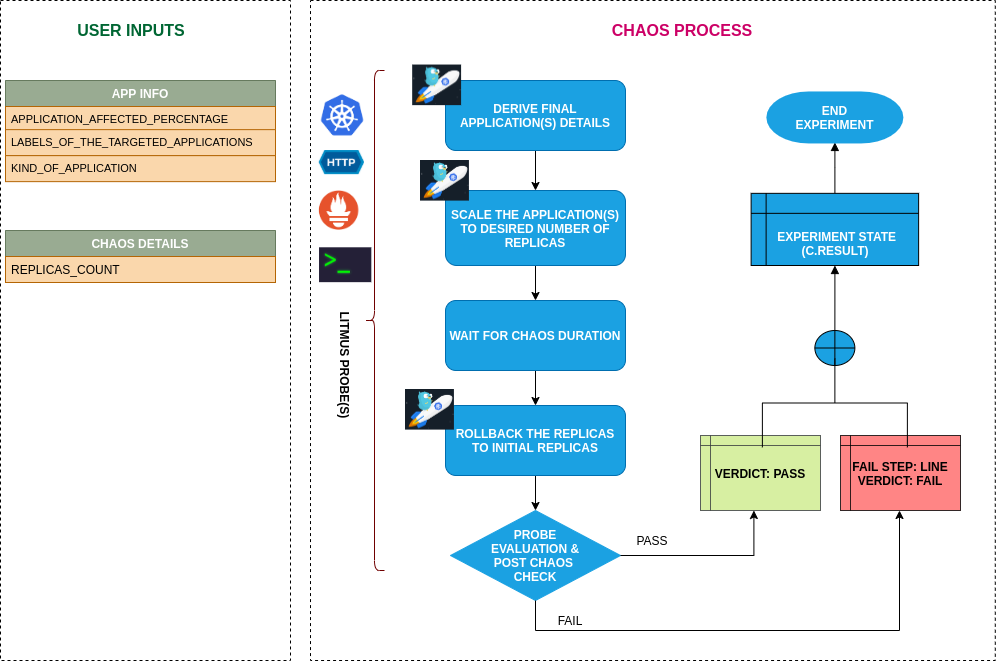

The experiment aims to check the ability of nodes to accommodate the number of replicas a given application pod.

-

This experiment can be used for other scenarios as well, such as for checking the Node auto-scaling feature. For example, check if the pods are successfully rescheduled within a specified period in cases where the existing nodes are already running at the specified limits.

Scenario: Scale the replicas
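The check described above (scale the application, then confirm that the new pods become ready within a fixed period) can be approximated by hand. The following is a minimal sketch, not the experiment's own implementation; the helper names `ready_replicas` and `wait_for_replicas` are hypothetical, and it assumes the target is a Deployment reachable with `kubectl`.

```shell
# Prints the number of ready replicas of a deployment (empty when none are ready).
# Usage: ready_replicas <deployment-name> <namespace>
ready_replicas() {
  kubectl get deployment "$1" -n "$2" -o jsonpath='{.status.readyReplicas}'
}

# Usage: wait_for_replicas <desired> <timeout-seconds> <command...>
# Polls <command...> once per second until it prints a count >= desired.
# Returns 0 on success and 1 once the timeout has elapsed.
wait_for_replicas() {
  local desired="$1" timeout="$2" elapsed=0 ready
  shift 2
  while [ "$elapsed" -le "$timeout" ]; do
    ready="$("$@" 2>/dev/null)"
    # An empty result is treated as zero ready replicas.
    if [ "${ready:-0}" -ge "$desired" ]; then
      return 0
    fi
    sleep 1
    elapsed=$((elapsed + 1))
  done
  return 1
}
```

For example, assuming a deployment named `nginx` in the `default` namespace, `kubectl scale deployment nginx --replicas=3 -n default` followed by `wait_for_replicas 3 60 ready_replicas nginx default` succeeds only if three replicas are ready within roughly 60 seconds.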

Uses

View the uses of the experiment

coming soon

Prerequisites

Verify the prerequisites

- Ensure that the Kubernetes version is > 1.16

- Ensure that the Litmus Chaos Operator is running by executing `kubectl get pods` in the operator namespace (typically, `litmus`). If not, install from here

- Ensure that the `pod-autoscaler` experiment resource is available in the cluster by executing `kubectl get chaosexperiments` in the desired namespace. If not, install from here
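The checks above can be collected into a small script. This is a sketch under stated assumptions: the helper names `version_gt` and `check_prerequisites` are hypothetical, `jq` is assumed to be installed for reading the server version, and `sort` is assumed to support `-V` (GNU coreutils and recent BSD/macOS).

```shell
# Succeeds when version $1 is strictly greater than version $2.
version_gt() {
  [ "$1" != "$2" ] &&
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | tail -n 1)" = "$1" ]
}

# Usage: check_prerequisites [operator-namespace] [experiment-namespace]
check_prerequisites() {
  local operator_ns="${1:-litmus}" experiment_ns="${2:-default}" version

  # Read the server version, e.g. "v1.27.3", and strip the leading "v"
  # and any vendor suffix such as "-gke.1" or "+k3s1".
  version="$(kubectl version -o json | jq -r '.serverVersion.gitVersion')" || return 1
  version="${version#v}"
  version="${version%%[-+]*}"
  if ! version_gt "$version" "1.16"; then
    echo "Kubernetes $version is not > 1.16" >&2
    return 1
  fi

  # The Litmus Chaos Operator pod should be listed as Running here.
  kubectl get pods -n "$operator_ns" || return 1

  # The pod-autoscaler ChaosExperiment should exist in the desired namespace.
  kubectl get chaosexperiments pod-autoscaler -n "$experiment_ns" || return 1
}
```

Running `check_prerequisites litmus default` prints the operator pods and the experiment resource, and returns a non-zero status if any check fails.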

Default Validations

View the default validations

The application pods should be in running state before and after chaos injection.

Minimal RBAC configuration example (optional)

NOTE

If you are using this experiment as part of a Litmus workflow constructed and executed from chaos-center, then you may be making use of the litmus-admin RBAC, which is pre-installed in the cluster as part of the agent setup.

View the Minimal RBAC permissions

---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: pod-autoscaler-sa
  namespace: default
  labels:
    name: pod-autoscaler-sa
    app.kubernetes.io/part-of: litmus
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: pod-autoscaler-sa
  labels:
    name: pod-autoscaler-sa
    app.kubernetes.io/part-of: litmus
rules:
  # Create and monitor the experiment and helper pods
  - apiGroups: [""]
    resources: ["pods"]
    verbs: ["create","delete","get","list","patch","update","deletecollection"]
  # Perform CRUD operations on the events inside chaosengine and chaosresult
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create","get","list","patch","update"]
  # Fetch configmap details and mount them to the experiment pod (if specified)
  - apiGroups: [""]
    resources: ["configmaps"]
    verbs: ["get","list"]
  # Track and get the logs of the runner, experiment, and helper pods
  - apiGroups: [""]
    resources: ["pods/log"]
    verbs: ["get","list","watch"]
  # Create and manage the execution of commands inside the target container
  - apiGroups: [""]
    resources: ["pods/exec"]
    verbs: ["get","list","create"]
  # Perform CRUD operations on the deployments and statefulsets
  - apiGroups: ["apps"]
    resources: ["deployments","statefulsets"]
    verbs: ["list","get","patch","update"]
  # Configure and monitor the experiment job from the chaos-runner pod
  - apiGroups: ["batch"]
    resources: ["jobs"]
    verbs: ["create","list","get","delete","deletecollection"]
  # Create, poll the status of, and delete the litmus chaos resources used within a chaos workflow
  - apiGroups: ["litmuschaos.io"]
    resources: ["chaosengines","chaosexperiments","chaosresults"]
    verbs: ["create","list","get","patch","update","delete"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: pod-autoscaler-sa
  labels:
    name: pod-autoscaler-sa
    app.kubernetes.io/part-of: litmus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: pod-autoscaler-sa
subjects:
  - kind: ServiceAccount
    name: pod-autoscaler-sa
    namespace: default
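Once the RBAC manifest has been applied (for example with `kubectl apply -f` on a file holding the three resources), the binding can be spot-checked by impersonating the service account. The sketch below is illustrative only: `sa_subject` and `verify_rbac` are hypothetical helper names, and only the deployment permissions are checked.

```shell
# Builds the user name Kubernetes assigns to a service account.
# Usage: sa_subject <namespace> <service-account>
sa_subject() {
  printf 'system:serviceaccount:%s:%s\n' "$1" "$2"
}

# Usage: verify_rbac [namespace] [service-account]
# Checks that the service account may list, get, patch, and update deployments.
verify_rbac() {
  local ns="${1:-default}" sa="${2:-pod-autoscaler-sa}" subject verb failed=0
  subject="$(sa_subject "$ns" "$sa")"
  for verb in list get patch update; do
    # "kubectl auth can-i" exits non-zero when the action is not allowed.
    if ! kubectl auth can-i "$verb" deployments.apps --as="$subject" -n "$ns" >/dev/null; then
      echo "missing permission: $verb deployments" >&2
      failed=1
    fi
  done
  return "$failed"
}
```

Impersonation with `--as` requires that the user running the command is itself allowed to impersonate service accounts, which is typically the case for cluster administrators.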

Experiment tunables

Check the experiment tunables

Mandatory Fields

| Variables | Description | Notes |
|---|---|---|
| REPLICA_COUNT | Number of replicas up to which the application should be scaled | nil |

Optional Fields

| Variables | Description | Notes |
|---|---|---|
| TOTAL_CHAOS_DURATION | The timeout for the chaos experiment (in seconds) | Defaults to 60 |
| LIB | The chaos lib used to inject the chaos | Defaults to `litmus` |
| RAMP_TIME | Period to wait before and after injection of chaos (in seconds) | |

Experiment Examples

Common and Pod specific tunables

Refer to the common attributes and the pod-specific tunables to tune the tunables that are common to all experiments and those that are specific to pods.

Replica counts

It defines the number of replicas that should be present in the targeted application during the chaos. It can be tuned via the `REPLICA_COUNT` ENV.

Use the following example to tune this:

# provide the number of replicas
apiVersion: litmuschaos.io/v1alpha1
kind: ChaosEngine
metadata:
  name: engine-nginx
spec:
  engineState: "active"
  annotationCheck: "false"
  appinfo:
    appns: "default"
    applabel: "app=nginx"
    appkind: "deployment"
  chaosServiceAccount: pod-autoscaler-sa
  experiments:
  - name: pod-autoscaler
    spec:
      components:
        env:
        # number of replicas to scale up to
        - name: REPLICA_COUNT
          value: '3'
        - name: TOTAL_CHAOS_DURATION
          value: '60'
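To try the ChaosEngine above, it can be applied with `kubectl` and the outcome read from the resulting ChaosResult. The following is a rough sketch that rests on two assumptions: that Litmus names the ChaosResult `<engine-name>-<experiment-name>`, and that the verdict is stored at `.status.experimentStatus.verdict`. The helper names and the manifest file name are hypothetical.

```shell
# Name of the ChaosResult for an engine/experiment pair.
# Assumption: Litmus names it "<engine-name>-<experiment-name>".
chaosresult_name() {
  printf '%s-%s\n' "$1" "$2"
}

# Usage: apply_engine <manifest-file> [namespace]
apply_engine() {
  kubectl apply -f "$1" -n "${2:-default}"
}

# Usage: get_verdict <engine-name> <experiment-name> [namespace]
# Prints the verdict recorded in the ChaosResult. The value is only final
# once the experiment has completed.
# Assumption: the verdict lives at .status.experimentStatus.verdict.
get_verdict() {
  local result
  result="$(chaosresult_name "$1" "$2")"
  kubectl get chaosresult "$result" -n "${3:-default}" \
    -o jsonpath='{.status.experimentStatus.verdict}'
}
```

For example, after saving the manifest as `engine-nginx.yaml`, run `apply_engine engine-nginx.yaml default`, wait for the experiment to finish, and then run `get_verdict engine-nginx pod-autoscaler default`.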